The Challenges of Building a Fair AI Memory Benchmark

Evaluating AI memory systems is harder than it looks. Static Q&A datasets do not reflect how autonomous coding agents actually work. Here is how we built an open-source, multi-session benchmark that exposed a critical flaw in existing memory tools, and what the results actually mean.

Why Standard Benchmarks Fall Short

Most memory benchmarks test simple retrieval: "The user's favorite color is blue. What is the user's favorite color?" That is not a useful test for a coding agent.

Real software engineering is non-linear. In session one, the team picks a monolithic architecture. In session two, they switch to microservices. In session three, they partially revert. A good memory system must track the current state of each decision independently, not just surface the most recent text that mentions the topic.

The popular LoCoMo benchmark (Long-Context Memory) was a starting point, but it was designed for personal assistant use cases. We adapted it for multi-session software engineering workflows where the stakes are higher and the data is more structured.

Designing a Level Playing Field

We gave both Memstate and Mem0 every possible advantage to make the comparison fair:

- Same agent model: Claude Sonnet 4.6 for all runs. The LLM was not the variable being tested.

- Custom system prompts: Each tool was allowed its own system prompt prefix explaining how to use its MCP tools most effectively. Neither system had an unfair instruction advantage.

- 20 runs per scenario: Each scenario was run 20 times per system to account for LLM non-determinism. All scores are averages.

- Open source: All scenario prompts, scoring scripts, and raw result files are published on GitHub. Anyone can reproduce the results.

The scoring weights reflect what matters most for coding agents: fact recall accuracy (40%), conflict detection (25%), cross-session continuity (25%), and token efficiency (10%). Token efficiency is weighted low on purpose. A system that retrieves nothing uses zero tokens but is useless.

The 5 Scenarios

These are not toy tests. Each scenario is a multi-session software engineering project where the agent starts every session with a blank context window and must rely entirely on its memory system to reconstruct what it knows. Requirements change. Decisions reverse. The agent must track the current truth, not just the most recent text.

Web App Architecture Evolution

HardThe agent worked on a full-stack app across 6 sessions. Architecture changed from monolith to microservices, then partially reverted to a modular monolith for auth and billing only. The agent had to track the current architecture per service, not just the most recent global decision.

Auth System Migration

Very HardStarted with JWT auth. Migrated to session cookies, added OAuth for social login, then reverted API clients back to JWT. The agent had to correctly recall which auth strategy applied to which client type at any given point.

Database Schema Evolution

Very HardA PostgreSQL schema evolved across 7 sessions. Tables added, columns renamed, data types changed, two tables merged. Mid-migration questions like "Does the orders table still have a status_code field?" required knowing the exact schema state at each point, not just the final version.

API Versioning Conflicts

ExtremeThree concurrent API versions (v1, v2, v3) maintained across 8 sessions. Breaking changes in v2 did not apply to v1. v3 deprecated several v2 endpoints. The agent had to recall version-specific constraints and identify when a question about "the API" needed version clarification.

Team Decision Reversal

ExtremeFour major architectural decisions were made and reversed across 6 sessions: message queue (Kafka, RabbitMQ, back to Kafka), deployment target (ECS, Kubernetes, back to ECS), ORM (Prisma then Drizzle), caching (Redis, in-memory, back to Redis). The agent had to recall the current decision for each area without conflating it with a previous state.

Why Is the Gap So Large?

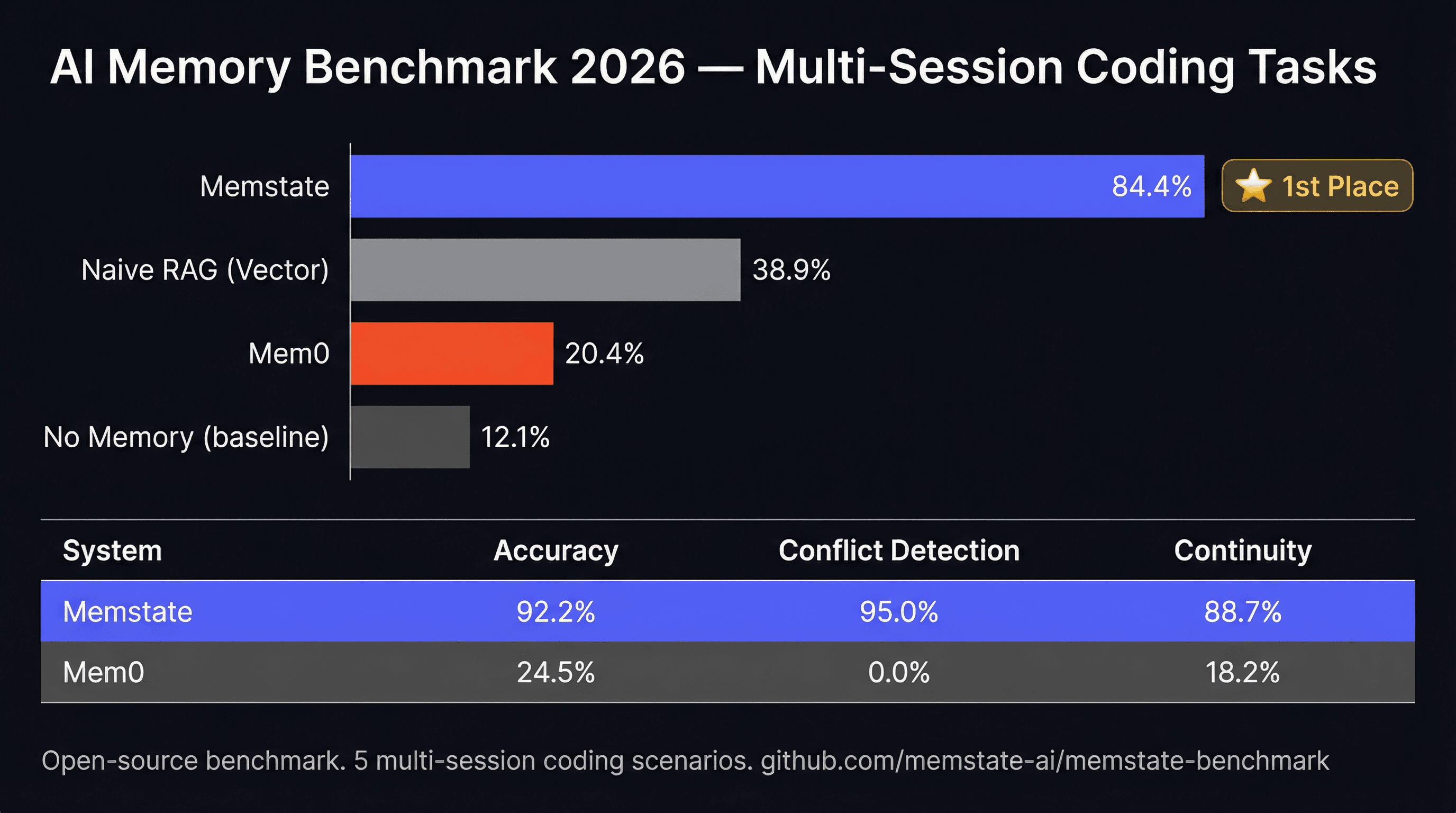

The 5.3x accuracy gap is not primarily about retrieval quality. It is about architecture. Three things explain most of it:

1. Conflict detection (Memstate 95% vs Mem0 20.2%)

When a fact changes, Mem0 keeps both the old and new version in its vector store. The agent retrieves both and has to guess which is current. It often gets it wrong. Memstate versions every fact and always returns the current value. This single difference accounts for a large portion of the accuracy gap, especially in the Auth Migration and API Versioning scenarios.

2. Structured vs unstructured retrieval (Memstate 92.16% vs Mem0 17.5%)

Vector similarity search returns the N most similar text chunks to a query. For precise factual questions like "What is the current database port?", this is unreliable. The answer might be buried in a paragraph about something else, or the wrong version of the answer might score higher on similarity. Memstate stores facts as typed key-value pairs and returns exact matches.

3. Cross-session continuity (Memstate 88.74% vs Mem0 17.21%)

Vector stores degrade over time as more memories accumulate. Relevant facts get pushed down by newer, less relevant ones. Memstate's structured model does not degrade. Each fact is independently addressable regardless of how many other facts exist.

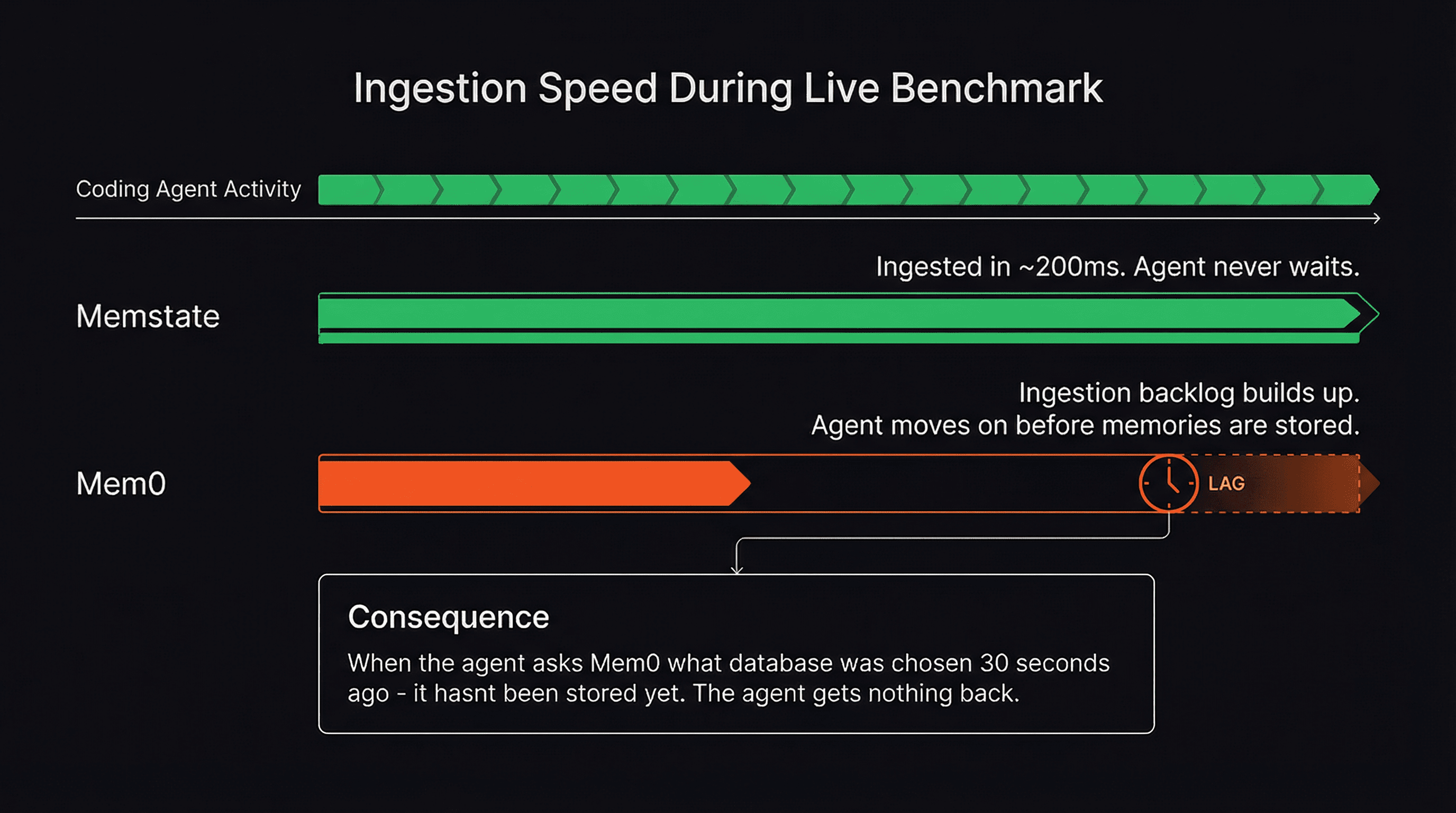

The Surprise Finding: Ingestion Speed

During early runs, we let the coding agents move as fast as they wanted. This exposed a vulnerability in tools like Mem0 that we had not anticipated: ingestion latency.

When an agent finishes a task, it sends a summary to the memory system. If the memory system takes 5-10 seconds to process and index that memory, the agent has already moved on to the next task. When it immediately queries for context on the next step, the facts it just saved are not there yet.

In early test runs, Mem0 was effectively running blind during fast-paced sessions because its ingestion pipeline could not keep up. We adjusted the methodology to give both systems adequate time between sessions, but this finding is worth noting for anyone running agents in real-time workflows where speed matters.

Memstate handles this because our custom-trained fact extraction models are optimized for speed. Memories are ingested and queryable in milliseconds, so the agent never outpaces its own memory.

What We Did to Keep It Fair

- Same agent model (Claude Sonnet 4.6) for all systems across all runs.

- Custom system prompt prefixes allowed for each tool. No unfair instruction advantage.

- 20 runs per scenario per system. All scores are averages to account for LLM variance.

- Token efficiency weighted at 10% only. We did not want to reward systems that retrieve nothing.

- All scenario prompts, scoring scripts, and raw results are open source and reproducible.

Verify the Results Yourself

We believe in full transparency. The entire benchmark suite, including all scenarios, prompts, evaluation scripts, and raw result files, is open source. Run it yourself, add new systems, or adapt it for your own use case.

View Benchmark Repository on GitHubRelated Reading

Build with reliable memory

The benchmark winner is free to start. Setup takes 30 seconds.